A strategy for implementing test automation from scratch in a complex system

Several years ago I worked for a telco company, in what was more or less its R&D branch. Of the several roles I had there, my last one was "technology consultant". I was part of a centralized team focused on processes, trending technologies, tooling, CI/CD, etc.

It was quite interesting because I could try new things and help others. On the other hand, it was also quite challenging because the company was highly hierarchical and most of the teams were working in waterfall, on highly complex products with a long history and... old technologies. I loved working there, since there were a bunch of departments working on quite different things: messaging systems, intelligent networks, mobile and TV apps, hardware equipment and more.

The context

At a certain point, I was assigned the task of helping an "external" team successfully implement test automation for fiber optics "solutions" (we'll come back to this).

To give a bit more background, this team was a testing team, part of a broader testing team that in turn was part of a department. The department had several development teams, mostly hardware related but also software related. Each software team was working on a particular area: some on the CLI (command line interface) of the equipment, some on the management systems, some on the firmware, etc. When a customer bought a product, they were buying the whole stack of products or a combination of some of them. Some products existed on their own, while others only made sense in the context of the whole stack. To make this even harder, some customers had their own configurations (i.e. variants of the product(s)).

Testing was essentially being done end-to-end, sometimes at the management software level. Regression testing was being performed manually against each build; as time was scarce, only a subset of tests could be executed; it was simply impossible to execute everything because meanwhile a new build was knocking on the door.

Challenges

We had many challenges, technical and non-technical; in hindsight, our biggest challenge was human-related, which is also the hardest kind to overcome, IMO.

Some challenges included:

- lack of communication

- testers seen as the end of the line and as ultimately responsible for quality

- siloed teams, i.e. teams working separately (devs vs testers, testers vs testers, devs vs devs)

- many assumptions

- missing or outdated documentation

- lack of coding/development know-how

- lack of expertise in test automation

- no CI/CD

- very little automation, except for a few scripts

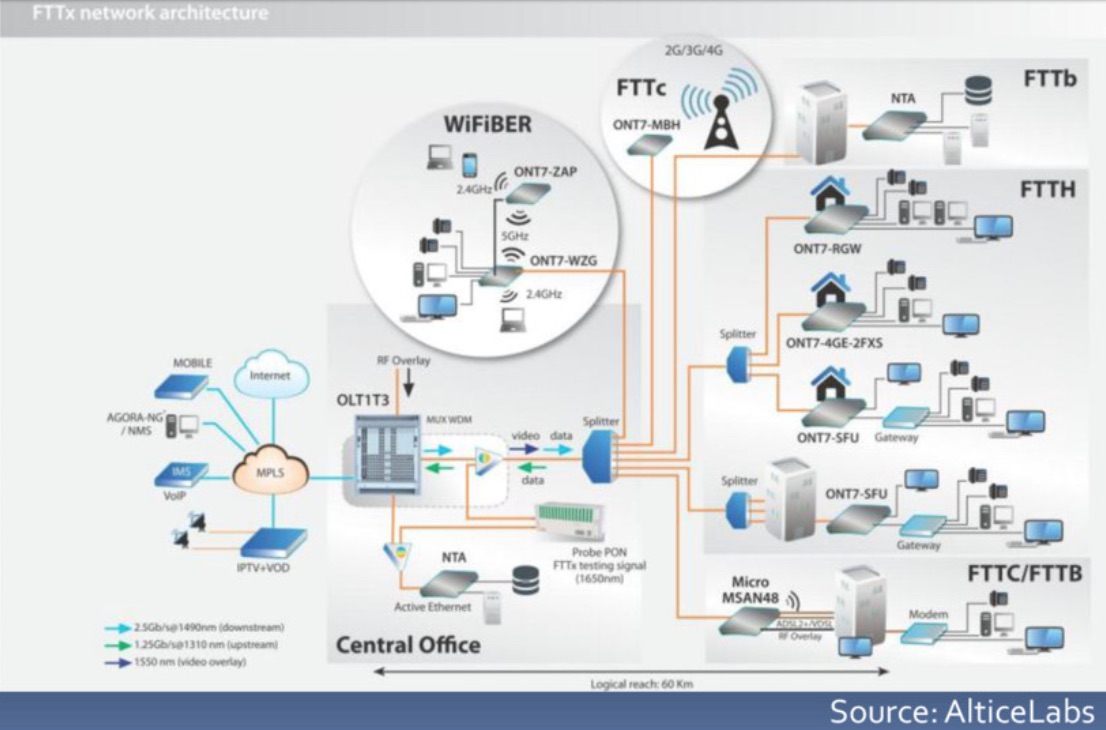

Besides this, our target "solution", or SUT (system under test), was too complex and apparently impossible to automate, because we were talking about physical hardware with many physical interconnections, both optical and electrical. Some of this networking equipment is not easy to automate from the outside.

Strategy

As we had a strict timeline and we wanted to show results to all stakeholders (yes, this is probably one of the most important aspects), we needed to be very pragmatic. Thus, and since the team was very small (4, myself included), we clearly defined the SUT and told everyone that we wouldn't be testing everything; it wasn't viable. We also stated that narrowing the scope would allow us to do more in the future and that this part was essential.

We looked at the whole fiber optics system (customer and operator side) and chose the operating system of the main backend/operator side equipment as the main target for testing. We then looked at all the available interfaces and thought from an automation point of view: "Can we use this interface to communicate with the equipment? Can we build an API somehow on top of that? How easy is it going to be to build that API, and how stable will it be? What are the cons?".

What seemed to be impossible at first sight soon became feasible. We were thinking "hmmm, we can interact with that equipment if we do this or if we use that...". Building these layers of communication gave us the foundation on which we could build our test automation.
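To make the idea of "layers of communication" more concrete, here is a minimal sketch in Ruby. It is not the code we wrote; the command syntax (`show port`, `port ... enable`), the reply format and the class names are all hypothetical, and the transport is faked so the example runs without any equipment. In a real setup the transport would wrap whatever interface turned out to be usable (Telnet, SSH, a serial line...).

```ruby
# Hypothetical transport: anything that can send a text command and return the reply.
# It is faked here with canned replies so the sketch runs without real hardware.
class FakeCliTransport
  attr_reader :sent

  def initialize(replies)
    @replies = replies
    @sent = []
  end

  def execute(command)
    @sent << command
    @replies.fetch(command) { raise ArgumentError, "unexpected command: #{command}" }
  end
end

# Thin API layer: hides command syntax and output parsing from the tests.
class EquipmentApi
  def initialize(transport)
    @transport = transport
  end

  # Returns the port state as a symbol, e.g. :up or :down.
  def port_state(port)
    reply = @transport.execute("show port #{port}")
    match = reply.match(/state:\s*(\w+)/i)
    raise "could not parse reply: #{reply.inspect}" unless match

    match[1].downcase.to_sym
  end

  # Returns true when the equipment acknowledges the command.
  def enable_port(port)
    @transport.execute("port #{port} enable").strip == "OK"
  end
end

transport = FakeCliTransport.new(
  "show port 1" => "Port 1  State: UP  Speed: 1G",
  "port 2 enable" => "OK\n"
)
api = EquipmentApi.new(transport)
puts api.port_state(1)   # the test only sees a symbol, not the raw CLI text
puts api.enable_port(2)
```

The point of the design is that tests talk to `EquipmentApi` only; if the underlying interface changes, only the transport and the parsing need to be touched.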

Coding skills were low in the team; no problem, we can all learn. Ruby was chosen as it is a scripting language that is quite easy to learn; besides, there are a ton of libraries that can easily extend it to do whatever we needed. Thus, the team didn't have to deal with low-level details and could see results sooner.

Cucumber was used to implement the testing scenarios; we were not adopting BDD, but we were taking advantage of the Gherkin syntax and of how naturally it lets you reuse executable steps across different scenarios.
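To illustrate what "reusable, executable steps" means, below is a toy step registry in plain Ruby. It is deliberately not Cucumber (the real thing does much more and has its own API); it only mimics the core idea that a line of Gherkin-like text is matched against a pattern and the captured values are passed to a block. The step wording, `StepRegistry` and `World` are made up for this sketch.

```ruby
# Toy step registry (NOT Cucumber itself): maps step text patterns to blocks.
class StepRegistry
  def initialize
    @steps = []
  end

  # Register a step definition: a pattern plus the code to run when it matches.
  def step(pattern, &block)
    @steps << [pattern, block]
  end

  # Run one line of scenario text against the shared state object ("world").
  def run(text, world)
    # Drop the leading keyword so "Given x" and "And x" share one definition.
    body = text.sub(/\A(Given|When|Then|And|But)\s+/, "")
    pattern, block = @steps.find { |candidate, _| candidate.match?(body) }
    raise ArgumentError, "undefined step: #{text}" unless pattern

    world.instance_exec(*pattern.match(body).captures, &block)
  end
end

# Shared state for one scenario; here just a hash of port number => state.
World = Struct.new(:ports)

registry = StepRegistry.new
registry.step(/\Aport (\d+) is enabled\z/) { |port| ports[port.to_i] = :up }
registry.step(/\Aport (\d+) should be (\w+)\z/) do |port, state|
  actual = ports[port.to_i]
  raise "port #{port} is #{actual.inspect}, expected #{state}" unless actual == state.to_sym
end

# Two step definitions cover four scenario lines.
scenario = [
  "Given port 1 is enabled",
  "And port 2 is enabled",
  "Then port 1 should be up",
  "And port 2 should be up"
]
world = World.new({})
scenario.each { |line| registry.run(line, world) }
```

In our case the blocks would call into the API layers mentioned earlier rather than poke at a hash, but the reuse works the same way: once a step exists, anyone can write new scenarios with it without touching code.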

Since the team was using Jira, we stopped building reports manually in Word, PDF, PowerPoint, whatever, and sending them by email; instead, we used Jira and proper dashboards that could be accessed even by C-level roles.

Even so, we met in person with all the team members, from the different teams, to show where we were and the gains we were already obtaining.

In sum,

- simplify our problem, i.e. make our SUT well-defined and remove the moving parts

- build APIs if necessary; abstract the hard parts and make them easy to use

- focus on regression testing

- think about risks, including the risks you're neglecting because you don't have enough time

- mentor other teams for "testability"

- provide real-time visibility of progress

- show what you were not doing before vs what you started to achieve

Lessons learned

- automation can be implemented successfully even on teams with few or no coding skills whatsoever

- documenting, even if briefly, reusable executable steps/keywords can help raise awareness of what's available

- building reusable libraries, each with a main person responsible (an "owner"), can motivate and empower people

- using Ruby+Cucumber was a good fit; in general, using languages and frameworks where people feel comfortable and that don't require too much bloatware can help achieve results faster and provide that feeling of progress sooner

- successful automation requires testability as a main concern from the start

If you're going to implement test automation in an ongoing project that didn't have testability as one of its concerns from the start, it is going to be hard, but it's not impossible.

In conclusion

Even though systems may be complex, people are probably the biggest blocker for automation :)

So, if you're going to implement test automation in an ongoing project, make sure that (in no special order):

- you have good communication between everyone (think of ways of promoting this)

- quality is something you all agree on and is everyone's responsibility

- everyone is involved in the "test automation project", by sharing goals and tasks

- everyone agrees, as much as possible, with the immediate, mid-term and long-term goals

- everyone understands the importance of testability

When the previous points are established, you may proceed with the more technical part:

- start simple, decompose your problem and focus your automation efforts

- if you have parts of your (aim to be) CI pipeline that are not automated, work on those (e.g. making sure the build process is fully automated and has no local dependencies)

- don't automate everything, and don't automate blindly; think from a risk perspective (more on RBT here) and about the repetitive tests/checks that you have to perform on every single build
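As a rough illustration of thinking from a risk perspective when time is scarce, here is a small Ruby sketch. It assumes each test has a likelihood-of-failure and an impact score (1 to 5) and an estimated run time; the scoring scheme (risk = likelihood x impact), the test names and the numbers are invented for the example, and the greedy selection is just one simple way to do it, not a prescribed method.

```ruby
# Hypothetical test record: name, likelihood of failure (1-5), impact (1-5),
# and estimated run time in minutes.
TestCase = Struct.new(:name, :likelihood, :impact, :minutes) do
  def risk
    likelihood * impact
  end
end

# Greedy pick: highest risk first (shorter tests win ties), skipping any test
# that no longer fits in the remaining time budget.
def select_by_risk(tests, budget_minutes)
  remaining = budget_minutes
  tests.sort_by { |t| [-t.risk, t.minutes] }.each_with_object([]) do |t, chosen|
    next if t.minutes > remaining

    chosen << t
    remaining -= t.minutes
  end
end

tests = [
  TestCase.new("provisioning", 4, 5, 30),
  TestCase.new("alarms", 3, 4, 20),
  TestCase.new("cli help text", 1, 1, 5),
  TestCase.new("firmware upgrade", 5, 5, 60)
]

selected = select_by_risk(tests, 90)
skipped = tests - selected
puts "run:     #{selected.map(&:name).join(', ')}"
puts "skipped: #{skipped.map(&:name).join(', ')}"
```

Listing what was skipped matters as much as what was run: it makes the neglected risks visible to everyone instead of leaving them implicit.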

Learn more

To learn more about the details, you can watch the following video presentations; they're a bit old though :) The slide deck is also available. If you want to know more about the challenges we faced, let me know and I'll be more than glad to go into more detail.

Presentation videos follow.

Thanks for reading this article; as always, this represents one point in time of my evolving view :) Feedback is always welcome. Feel free to leave comments, share, retweet or contact me. If you can and wish to support this and additional content, you may buy me a coffee ☕